COMPANY

Opal Security

DURATION

4 Bi-Weekly Sprints

ROLE

Lead Product Designer

DATE

Q2, 2025

OVERVIEW

Background

During a critical transformation from a product-led to a sales-led organization, the Risk Center visualization became the centerpiece feature. Enterprise sales teams relied on it to demonstrate the value of Least Privilege Management to potential customers seeking control over irregular access.

Opal Security had built a powerful infrastructure for access management, but its user-facing dashboard was failing to communicate value. Users were overwhelmed by data without context.

Redesign the Risk Center visualization and transform "risky noise" into clear, actionable threat signals CISOs could trust.

How can you trust what you can’t measure?

Security teams were paralyzed by an opaque risk scoring system that buried critical threats under a mountain of low-priority noise, making it impossible to distinguish immediate dangers from routine hygiene.

This lack of transparency eroded trust in the data, causing engineers to question the validity of alerts rather than take action to resolve them.

Key Friction Points

Lack of Trust: Users didn't trust the opaque risk scoring or prioritization logic.

Disjointed Experience: The risk visualization felt disconnected from the remediation workflows.

Confusing Visualizations: Complex charts added cognitive load instead of clarity.

Signal vs. Noise: Critical risks were buried under a mountain of low-priority alerts.

Missing Context: Users couldn't see what they didn't know, leaving blind spots.

Solution

We replaced opaque scoring with transparent, actionable intelligence that empowers security teams to remediate risk proactively.

We surfaced actionable context to drastically reduce the time spent identifying high-risk assets. By providing a clear, direct path to remediation, we empowered users to prioritize critical threats immediately.

This shift enabled security teams to track real progress over time, turning passive monitoring into active defense.

Opportunity Areas

Transparent Scoring: Deconstructing the "black box" to show every input, from vulnerabilities to misconfigurations.

Resource Metrics: Top-level KPIs for immediate situational awareness of assets, gaps, and threats.

Threat Isolation: Dedicated views for critical risks, such as standing access, to separate fires from hygiene.

Trend Forecasting: Historical sparklines to track remediation velocity and prove ROI to leadership.

RESEARCH

Research

Jobs mapping exercises revealed a disconnect between the system's data architecture and the user's mental model. Users thought in terms of "Threats," while the system spoke in "Anomalies."

How Might We Questions

How might we increase comprehension?

How might we help users assess, understand and react?

What proportion of access edges were unused?

How many sensitive resources were exposed?

How many anomalies went unaddressed?

Analysis

What We Learned

Security engineers don't trust what they can't verify! That insight drove four focus areas: giving users control, enforcing consistency, removing room for error, and making the value proposition impossible to miss. Here's what we found when we put those principles to the test.

Themes

User control and freedom

Consistency and standards

Error prevention and recovery

Value proposition and goal

Focus Areas

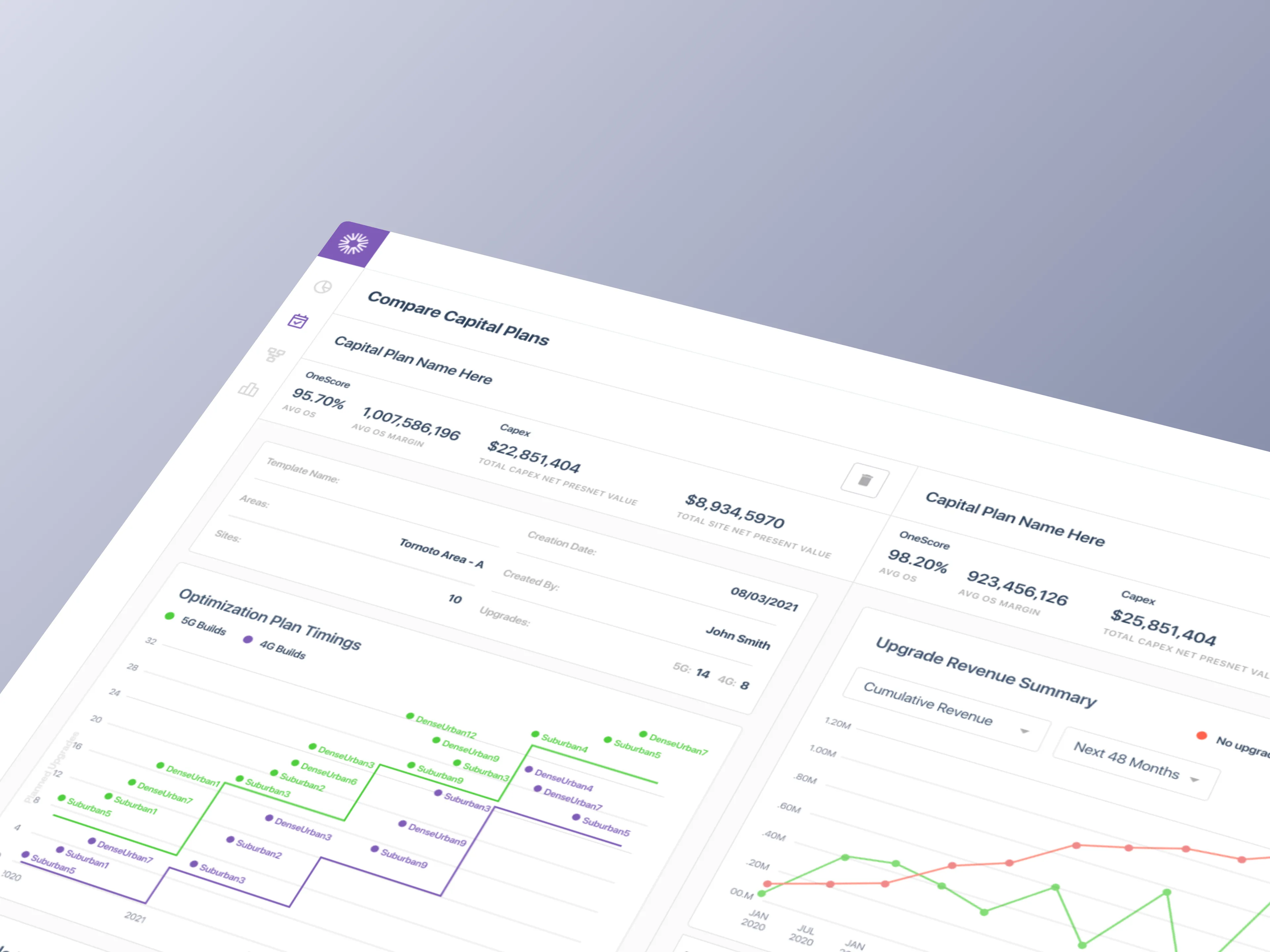

Data Postures: We tested four distinct approaches to posture (Qualitative, Quantitative, Proportional, and Distributed) to see which mental model resonated with security engineers.

Visualization Audit: We explored complex visualizations like Marimekko charts but found they added cognitive load. We pivoted to standard bar and donut charts for instant readability.

Multiple Iterations: Over three rounds of iterations, the design progressed from establishing the core Risk Center concept, to refining risk score transparency, to optimizing for high-density data display and reducing noise.

Iterations

Sprint Explorations

The iterations weren't linear. We cycled through quantifying risk data, auditing how engineers read visualizations, and stress-testing every assumption across three rounds before the design earned its place.

Sprint 1

Transparent Scoring: We surfaced every input behind the risk score, from vulnerabilities to misconfigurations, so engineers could verify the math and trust it.

Threat Isolation: A dedicated "Critical Risks" view isolated the most dangerous vectors, such as standing access, separating immediate fires from routine hygiene tasks.

Resource Metrics: Top-level KPIs provided immediate situational awareness. Users could see total assets, coverage gaps, and active threats at a glance without digging.

Trend Forecasting: Historical sparklines allowed teams to track their remediation velocity over time, proving the ROI of their security efforts to leadership.

Sprint 2

Predictive Visibility: Moving beyond static snapshots to show where risk is heading, enabling proactive intervention.

Historical Context: Visualizing risk reduction over time to demonstrate the value of security initiatives to leadership.

Actionable Deltas: Subdued green/red indicators highlight immediate changes in risk posture and reduce cognitive load.

Density Testing: Experimented with layout variations to optimize information density and enhance discoverability.

5

Rounds of design iterations

12

Total stakeholder demos

36+

New components tested

Final Designs

Clear, Actionable Risk Posture Intelligence

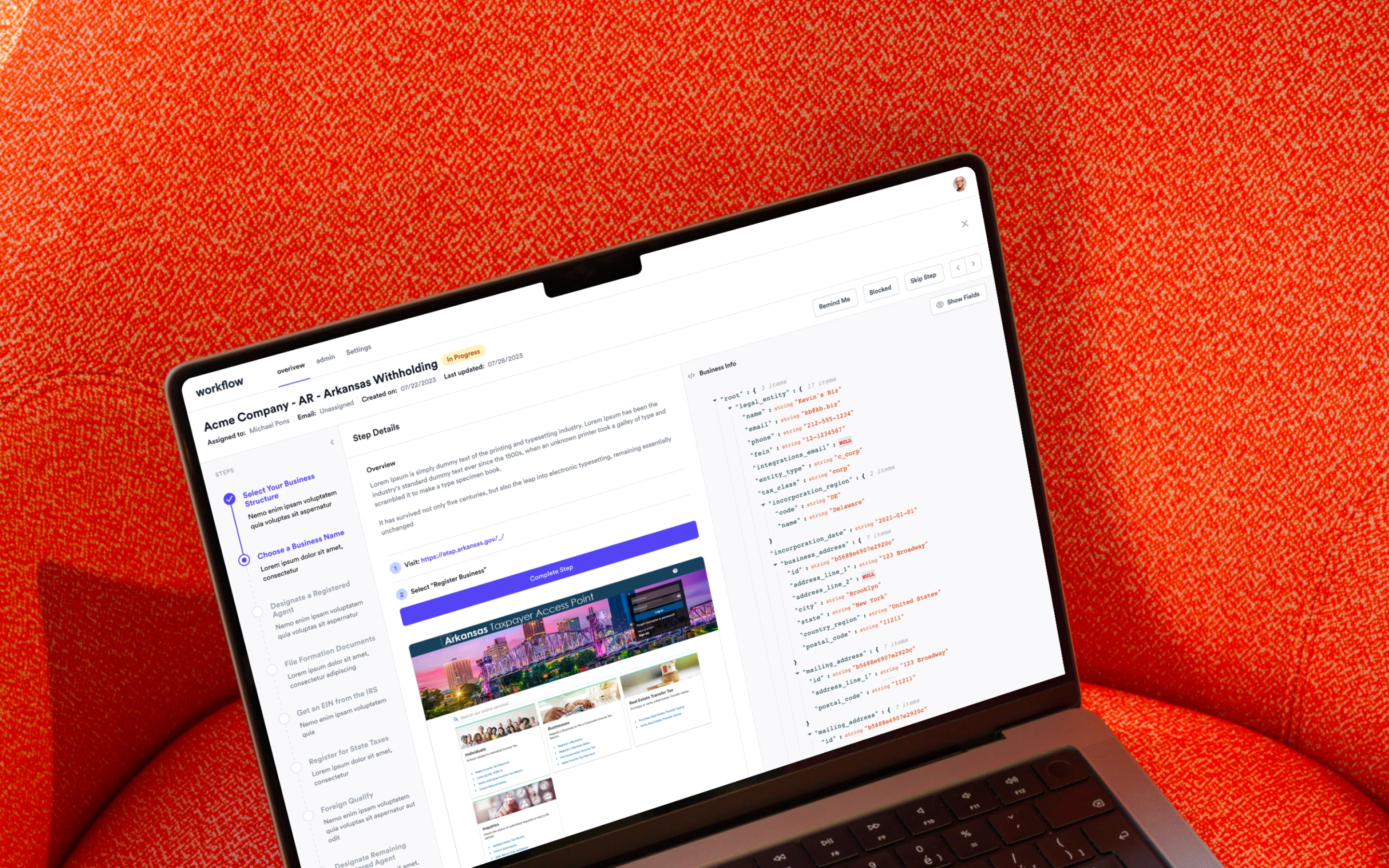

The final rounds of design focused on refining the visual hierarchy, simplifying metrics, and introducing a key new forecasting feature. We introduced the concept of "Critical Risk Threats" and organized them into clear, understandable categories.

The forecasting feature allows users to see the projected impact of their current security posture and the positive effect of using Opal to remediate risks. We tested several high-fidelity variations with users to arrive at the optimal design.

Impact Delivered

Measurable, Reliable Improvements

The final Risk Center replaced a single untrustworthy score with three transparent metrics, each backed by trend data that made directional progress visible at a glance. Critical Risk Threats gave engineers a structured way to investigate specific problem areas without context-switching, with remediation suggestions surfaced inline.

What We Delivered

Risk Metrics at a Glance: Three core signals (Permanent Access, Unused Access, and Outside Access) replaced the black box risk score with clear, trackable percentages engineers could act on immediately.

Trend Visibility: Each metric was paired with a sparkline chart, giving teams a directional read on whether their security posture was improving or deteriorating over time.

Critical Risk Threats: A dedicated threat category system organized risks into understandable buckets, replacing a single opaque score with specific, labeled problem areas.

Remediation Built In: Threat cards surfaced contextual detail and remediation suggestions inline, so engineers could investigate and resolve without leaving the dashboard.

High-Fidelity Validation: Multiple layout variations of the Critical Risk Threats component were tested with users to optimize information density before shipping the final design.

97%

Drastically reduced the time to approve access requests

3 min

Reduced review cycles from 3 days to just 3 minutes

7x

Increased adoption and channel usage by customers